Introducing Arize: An Observability Platform for Production AI

June 23, 2020

Ashu Garg

Our longstanding thesis at Foundation Capital is that all companies of scale are undergoing a self-reinvention, transforming themselves from software-assisted businesses into automated enterprises. For the past several years, enterprises have been trying to integrate artificial intelligence into nearly every business function, from marketing and finance to ops and customer service.

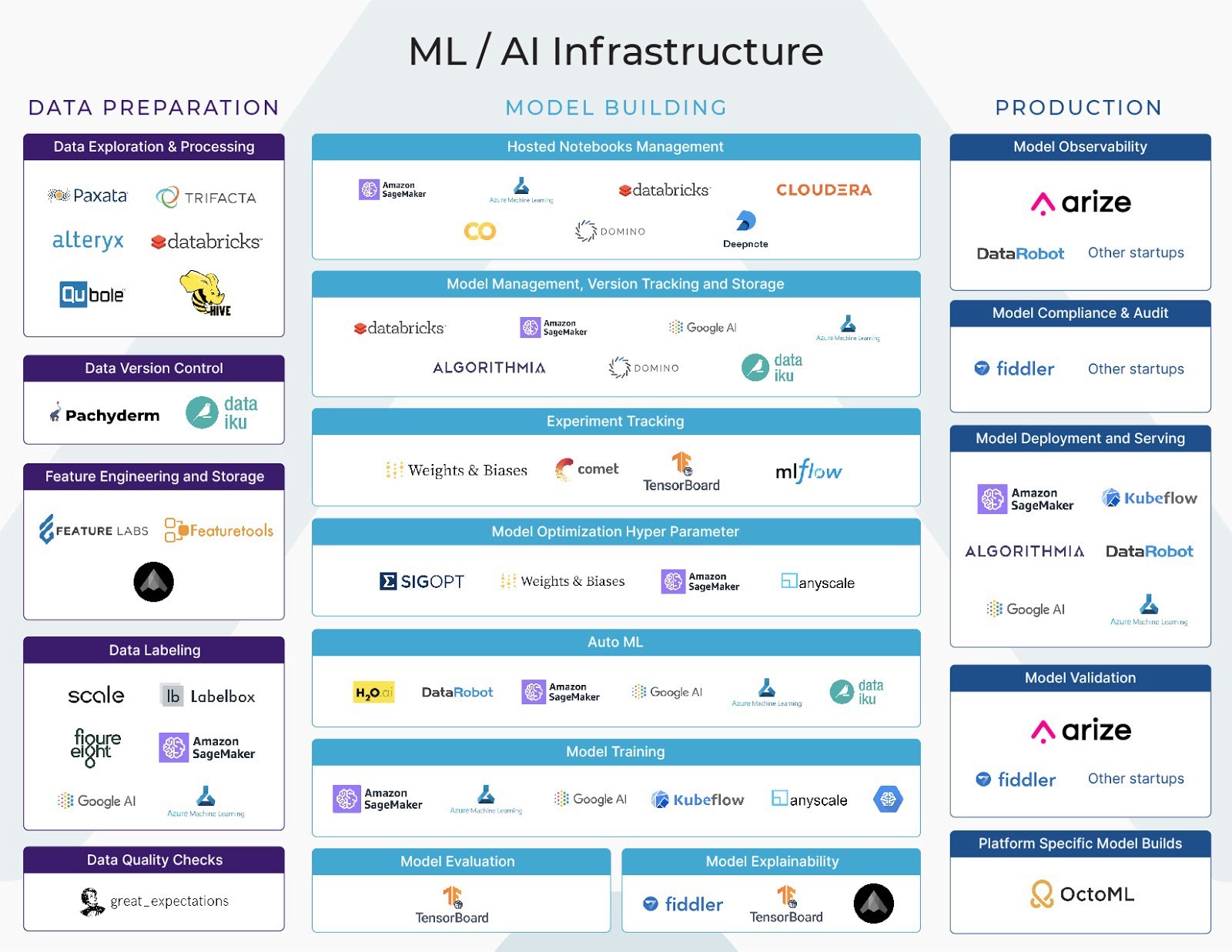

Most of these efforts have focused on the first two stages of the machine learning workflow: data preparation and model building. Amazingly, what happens in the final stage, when ML models go into production (that is, into real-world use), is largely left up to chance.

Are the models making accurate inferences? How far off are their predictions? Do they even bloody work? The dirty little secret in AI/ML is that once organizations deploy machine learning models IRW (in the real world), how those models behave is basically a guessing game, even for the engineers who built them. It’s like the toys in Toy Story coming to life when the humans aren’t watching, except that instead of having colorful adventures in which they learn heartwarming lessons about friendship and the impermanence of life, AI models are making split-second decisions about who qualifies for loans, determining which individuals warrant greater scrutiny from law enforcement, and hitting the brakes on self-driving cars. These are critical decisions that have real-world impact on people’s lives. And yet … no one really knows what the models are doing.

ML models are as imperfect as the people who build them: they make mistakes like people, and they inherit their makers’ biases. Without a mechanism to troubleshoot and debug what models do when they’re deployed in the business, and to monitor their performance over time, companies risk significant business consequences. That’s why ML “observability” (monitoring and explaining the performance of AI models in production) is a nascent but critical category. It is also why I’m happy to announce that we’ve led the first round of funding in Arize AI.

Arize is an observability platform built specifically for explaining and troubleshooting production AI. While there are currently end-to-end solutions for data collection and cleaning all the way through the model deployment stage, AI in production is a black box for which engineers lack any real tooling. ML models are built and deployed into different services with no real-time insight into their performance or downstream effects. Without observability and real-time analytics, data scientists can spend countless hours triaging a problem, poring over anomalies, and trying to identify potential causes. Is the issue with the data, or with the software, or is it the model itself? The only way to pinpoint what went wrong is for ML engineers to write an ad hoc script, grasping in the dark for insight, and then get a new version of the model deployed that hopefully reduces the problem. Or else it’s back to the drawing board.

It’s a primitive, worst-practices process that costs companies inordinate hours of their most valuable employees’ time, customer dissatisfaction, and millions of dollars lost to under-forecasting models. If machine learning is going to be integrated into every business and function, then the infrastructure that enables it must improve.

Enter Arize AI. Arize’s singular mission is to help models succeed in live environments by offering a tool that tracks model deployment down to the business-metric impact. Think New Relic or Datadog for ML. The platform is built for the ML engineers who put models into production and are responsible for debugging them if something goes wrong. With the Arize dashboard, they can monitor the predictions of ML models, diagnose and debug any issues without going back to square one, and explain what the models are trying to do.

Arize is led by CEO Jason Lopatecki and CPO Aparna Dhinakaran. I couldn’t wish for a better pair of cofounders to tackle the observability problem. Both hold EECS degrees from Berkeley. Both have deep experience building out and deploying thousands of AI models: Jason at TubeMogul, where he was a founding executive and built the company’s AI performance management system, and Aparna at Uber, where she led the development of critical components of the machine learning platform.

Both Aparna and Jason were also frustrated with having to amend and account for AI/ML problems at their companies, and were independently working to solve many of the same observability problems in deployed AI/ML. They joined forces when they realized they shared the conviction that the answer to the problems plaguing businesses using ML is a vertical solution: namely, software that can watch, troubleshoot, provide guardrails, and give insight back to the team on AI performance in the business. Or, as Jason wrote in Arize’s launch blog post, “We have a simple proposition in a complex industry. We are focused on one vertical, helping companies with their production ML and [doing] it immensely well.”

Speaking of shared adventures that lead to happy friendships, I’ve known Jason since 2010, when I invested in TubeMogul, a video-advertising software company that he cofounded with Brett Wilson. Jason and I worked closely as he led strategy and innovation at TubeMogul. He, Brett, and their team built the company into a booming business, which went public and was later acquired by Adobe. It was at TubeMogul that I also first crossed paths with Aparna, during her stint at the company working on fraud detection and mitigation. So, I’m on a first-name basis with both their impressive technical prowess and their deep commitment to helping companies stand up ML models in the real world. One of the most gratifying, albeit rare, moments for an investor like me is seeing someone succeed a second time; when Jason and Aparna came to me with their idea for a startup to make ML predictions explainable, actionable, and integrated with business metrics, I knew they would take me to my happy place.

The sweet spot for venture investors is backing a startup that combines a strong pre-existing relationship with the founders and a deeply held investment thesis. So I could not be more thrilled to be leading Arize’s first round of funding. Jason and Aparna are the real deal. They are building an essential component of the automated enterprise, which we here at Foundation Capital have been sketching out, speaking on, and investing in for a decade. Arize empowers companies with explainable analysis to fix models or upstream engineering issues; it will enable them to catch problems before customers see them, and give data scientists time back. Arize is the missing piece in making ML usable at scale in large businesses. So, when it comes to production AI, enterprises can keep hoping for the best as their strategy, or they can Arize.