On Friday, April 1st, 2016, I emailed Andrew Feldman to say that I'd be climbing over his back fence to hand deliver our term sheet to invest in Cerebras. It was April Fool’s Day, but I wasn’t fooling.

This was not, strictly speaking, a venture-firm best practice. But by then I had known Andrew for nine years, and we’d been riffing on his next company for nearly two. I was not about to lose the deal over a redlined sentence on a Saturday afternoon.

I first met Andrew in October of 2007, when he and Gary Lauterbach had just started SeaMicro. I passed on that round, but I really connected with the two—especially their first principles thinking—and I never stopped following them.

Meaningful relationships take time. So do meaningful companies. Today, from the outside, Cerebras is a ten-year company going public. From where I stand, it’s a nineteen-year relationship that finally gets to ring the bell.

Andrew and me in August 2019 at the Hot Chips conference on the Stanford campus, where Cerebras unveiled its first-generation Wafer-Scale Engine.

On deep relationships and unreasonable ambition

When AMD acquired SeaMicro in 2012, I had a hunch that Andrew would not last long inside a big company. He has, as I have said more than once, a massive chip on his shoulder and a heart full of disobedience. By early 2014, he was looking for the exit, and we started getting together to talk through what could come next.

At the time, two things were not obvious: that AI would be truly useful, and that the GPU was a suboptimal machine for it.

On the first question, the smartest people I knew were divided. AlexNet had arrived in 2012, and a few corners of the research community were starting to do magical things with convolutional neural networks. But in the broader software industry, AI was somewhere between a marketing buzzword and a science project.

The second question, about hardware, was barely being asked. GPUs had become the default for neural network training, mainly because researchers had accidentally discovered they were less terrible than CPUs. Creating a new computing system purpose-built for AI workloads meant betting against the architecture every researcher in the world was using.

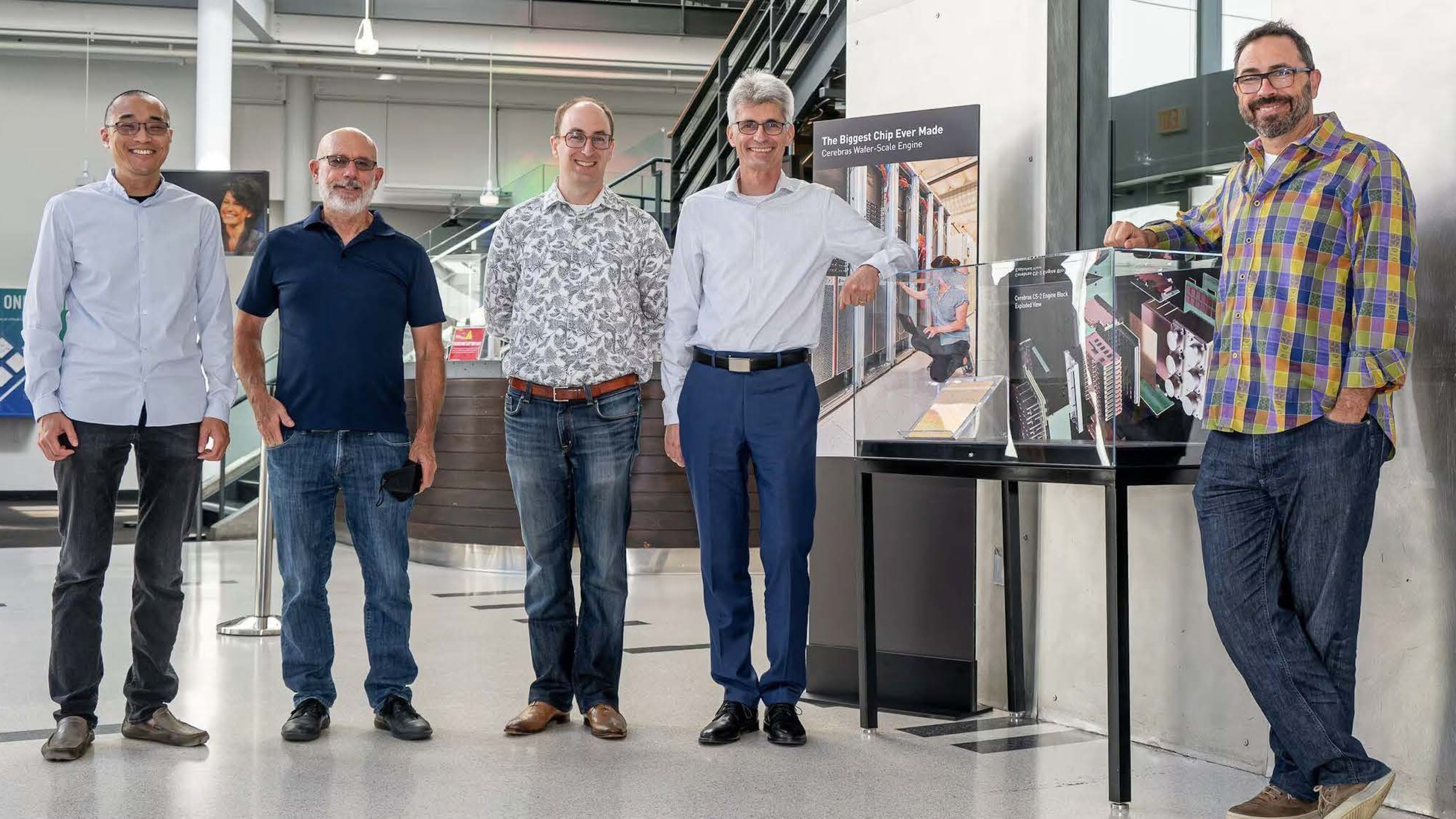

Andrew, Gary, and their co-founders, Sean, Michael, and JP, saw things differently. Collectively, they had been building chips and systems for decades: Gary drawing on his pioneering work in dataflow and out-of-order execution from the 1980s, Sean on advanced server architecture, Michael on software and compilers, and JP on hardware engineering. They were a rare combination of people—exceptional individually, but multiplicatively more so together—who could envision a new kind of computer.

They believed that if AI realized its potential, the resulting market would be vastly bigger than every existing form of computing combined.

They also saw the GPU for what it was: a battlefield promotion of a chip engineered for graphics. It was better than a CPU for parallel processing, but not what anyone would design for AI workloads if starting from scratch. Memory bandwidth, not raw compute, was the true constraint on what neural networks could achieve. That meant creating a chip optimized for the movement of data across a fabric, rather than the multiplication of matrices in isolated cores.

Internally, the decision to invest in Cerebras was far from consensus. Several of my partners had watched the previous wave of semiconductor investments deliver nothing but losses, and they were candid about their concerns. In the end, we came together as a team and, over that weekend in April 2016, we made it clear to Andrew that we wanted to be his first term sheet.

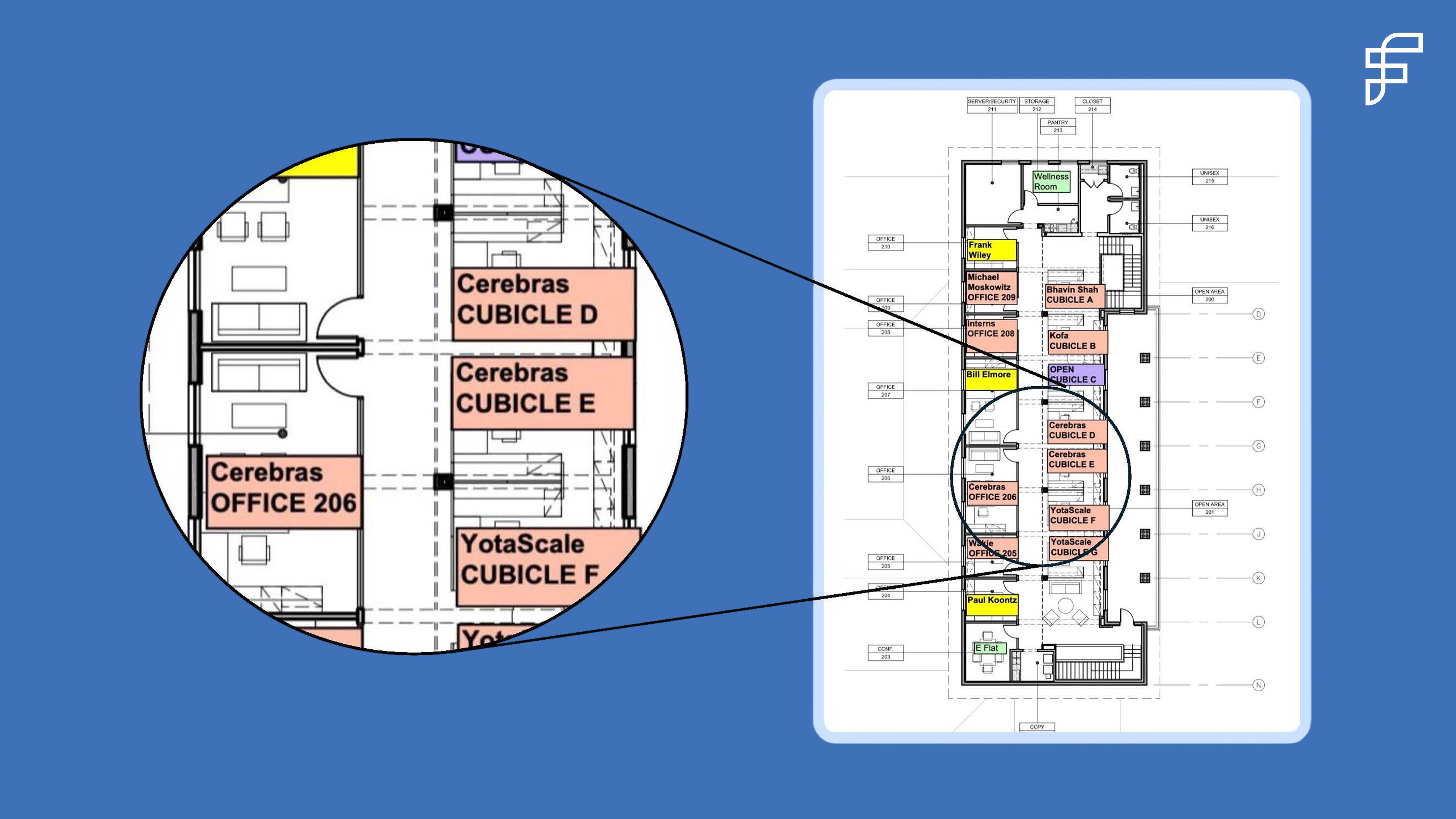

A few weeks later, Andrew, Gary, Sean, Michael, and JP moved into our EIR space on the second floor of 250 Middlefield. I still have the floor plan our office manager drew up at the time. On it, Cerebras sits next to one of Foundation's founders and a few doors down from Bhavin Shah, who would go on to start Moveworks. It was a good floor to be on.

Cerebras’s first HQ, on the second floor of our old office at 250 Middlefield.

Knowing which rules to bend, and which ones to break

Before Cerebras, the largest chip in the history of computing was about 840 square millimeters: a piece of silicon roughly the size of a postage stamp. Cerebras built a chip that was 46,000 square millimeters, roughly 55x larger.

Choosing wafer scale meant also choosing every downstream design challenge that came with it. In the nearly 80-year history of computing, no one had made it work, which meant no one had ever needed to solve the problems that came from trying. How do you power a chip that large? How do you cool one? How do you maintain electrical continuity across tens of thousands of connection points?

To get to wafer scale, Cerebras had to invent new approaches to nearly every facet of modern computing at once: semiconductors, systems, data fabric, software, and algorithms. Each was a startup in its own right. Andrew and his team leaned into the gnarliest technical challenges first. One by one, these problems began to bend under the weight of their intense, untiring effort.

Every six to eight weeks, we gathered for a board meeting. They walked us through what they had tried since the last meeting: a new variant of the systems design, a new approach to power delivery, an adjustment to thermal management. They explained, with the hard-won lucidity that comes from repeatedly confronting a systems problem from every angle, what they thought had gone wrong and what they planned to try next.

We asked questions, then dug in alongside the team, bringing whatever people, resources, and relationships were needed to gain purchase on the problem. When we reconvened six to eight weeks later, the storyline repeated with a new technical issue: a new edge of the frontier to explore. Each solution revealed the next thing that needed solving.

Their first prototype went up in smoke on its initial power-up in what the team labeled a "thermal event," which is what one calls a fire 🔥 when one does not want to scare one's board (or landlord).

I had been doing the math on watts per square millimeter, partly out of curiosity and partly because the numbers seemed too high to be right. We called in the engineers at Exponent, the failure-analysis firm whose former corporate name was, appropriately, Failure Analysis Associates. They confirmed that the power dissipation numbers were just as audacious as they looked, and they helped us think through a range of approaches that wouldn’t require suspending the second law of thermodynamics: the one argument Andrew is wise enough to avoid.

The discipline of an engineer is knowing which rules you can break, which ones you can bend, and which ones you have to respect. Andrew and his team had an earned sense of that distinction. They knew when they were arguing with convention, which they had set out to do, and when they were arguing with physics, which they had not.

When you’re building frontier technology, failures are inevitable. The only way to work through them is with discipline, persistence, and, above all, trust: trust in the mission; trust in each other; trust in the certainty that when the first prototype self-destructs, you’ll be back in the lab the next morning, starting on the next iteration.

There’s no transactional version of this work. There’s only the long version: staying in the room through the partial solutions and patient explanations, so that when it does finally succeed, you’re there to see it.

That moment came in August 2019. Andrew, Sean, and their team stood in the lab, watching an entirely new computer of their own design run for the first time. To the untutored eye, it was doing nothing visibly interesting: about as engaging, in Andrew's telling, as watching paint dry. What was different this time was that the paint had never dried before. They stood together for 30 minutes, then got back to work.

The people you build with matter

Some people pick problems based on what they know they can solve. Andrew picks problems based on what he believes is worth solving. Incremental iterations aren’t interesting to him: he wants a 1000x leap. From day zero, he set out to build Cerebras as a generational, n-of-one company.

Part of this drive is constitutional. Andrew describes it as the pathology of a computer architect who's been obsessed with an idea for decades, though I think of it more broadly as the pathology of a founder. He looks at a problem and asks whether he can build something to make it a step-function better. The next question he asks is whether anyone will care if he succeeds. If both answers are yes, he'll devote the next decade of his life to it.

Another part of this drive comes from his upbringing. Andrew grew up around brilliance the way most kids grow up around TV. His father, a pioneering professor of evolutionary biology, played Sunday doubles tennis with six men in regular rotation. Of the six, three won Nobel Prizes, and one a Fields Medal.

As Andrew tells it, these giants would patiently explain their work in physics, math, and molecular biology in words a child could grasp. He came away imprinted with what true brilliance looked like, and with the fact that it did not, as his mom put it, have to come with being a jerk.

I've come to think this is one of Andrew's defining traits, alongside his defiant ambition and bug-light instinct for finding the right problems. He has a deeply held belief that the most exceptional people he's encountered are also exceptionally kind.

This belief shapes how his teams come together to achieve incredibly hard things. The first 30 people he hired at Cerebras had all worked for him before; some of them have been with him since 1996. About 100 of the 700 people there now have followed him through multiple companies.

Cerebras’s founding team at the Computer History Museum in August 2022. From left to right: Sean Lie, Gary Lauterbach, Michael James, JP Fricker, and Andrew Feldman.

Importantly, kindness and competitiveness aren’t in tension. Andrew is fiercely committed to winning. He is, he likes to say, a professional David battling Goliath. Goliath moves slowly and watches for a head-on attack, which leaves every other approach open. David's advantage is showing up in ways and places that Goliath can't.

At SeaMicro, Andrew’s largest Japanese channel partner was NetOne. NetOne's primary supplier was Cisco, which entertained its partners on private planes and yachts worth more than most houses in Palo Alto. Andrew, on a more modest budget, invited NetOne’s CEO to his backyard for a barbecue. Later, the CEO told him that in decades of doing business with Cisco, he had never been invited to anyone's home. That seemingly small, human gesture—one that no Goliath would think to do—cemented their relationship.

From first term sheet to IPO

Celebrating with Andrew at NASDAQ.

This morning, Andrew rang the bell at NASDAQ. I was standing alongside him, ten years and 2,600 miles from where it all started in our office at 250 Middlefield.

Today, there are other rare founders doing what Andrew was doing a decade ago: sketching on whiteboards at 3 a.m., wrestling with technical problems no one has yet solved. They have their own massive chips on their shoulders and hearts full of disobedience. They're trying to figure out how to find a partner who will dig in alongside them when their first prototype won't power up, and who will stick around until it finally does.

These are the founders I want to back: the ones who pick problems worth solving, who dream of solutions that are 1000x better, and who grind through the inevitable challenges that come along the way.

For founders like Andrew, Gary, Sean, Michael, and JP, I'd happily climb over the back fence on a Saturday afternoon.